Web App LLM Integration Guide for Software Teams

Web apps today need to do more than display information: they must understand users, respond naturally, and deliver personalized experiences in real time. Large language models (LLMs) make this possible, powering everything from smart chat assistants to adaptive content engines.

This guide shows software teams how to integrate LLMs effectively, covering architecture patterns, implementation tips, security best practices, and future trends shaping intelligent web applications. Build smarter, more responsive products that keep users engaged and give your applications a competitive edge.

What Does LLM Integration Mean for Web Applications?

Large language model integration refers to embedding advanced language understanding and generation capabilities into web applications through model APIs. It enables applications to process conversational input, generate contextual output, drive personalization, and automate tasks that previously required manual logic or rigid rule engines.

For software teams building modern web solutions, this integration expands core functionality while maintaining performance, scalability, and operational governance.

Strategic Business Use Cases

1. Conversational Interfaces & Knowledge Assistants

- Handle FAQs and troubleshoot issues automatically.

- Provide context-aware responses for better UX.

- Enable employees to query internal knowledge bases in natural language.

- Support consistent interactions across web, mobile, and chat platforms.

2. Personalized Content Delivery

- Recommend products, articles, or features based on user behavior.

- Summarize long reports or updates for quick consumption.

- Adapt content to user preferences, skill level, or engagement patterns.

3. Developer Productivity Enhancements

- Generate code snippets, APIs, and documentation automatically.

- Detect bugs and suggest improvements in real time.

- Accelerate onboarding and learning for junior developers.

Some organizations that adopt model‑powered developer productivity tools report improvements of up to 55 percent in coding efficiency and release velocity, though results vary by team, task, and how the gain is measured.

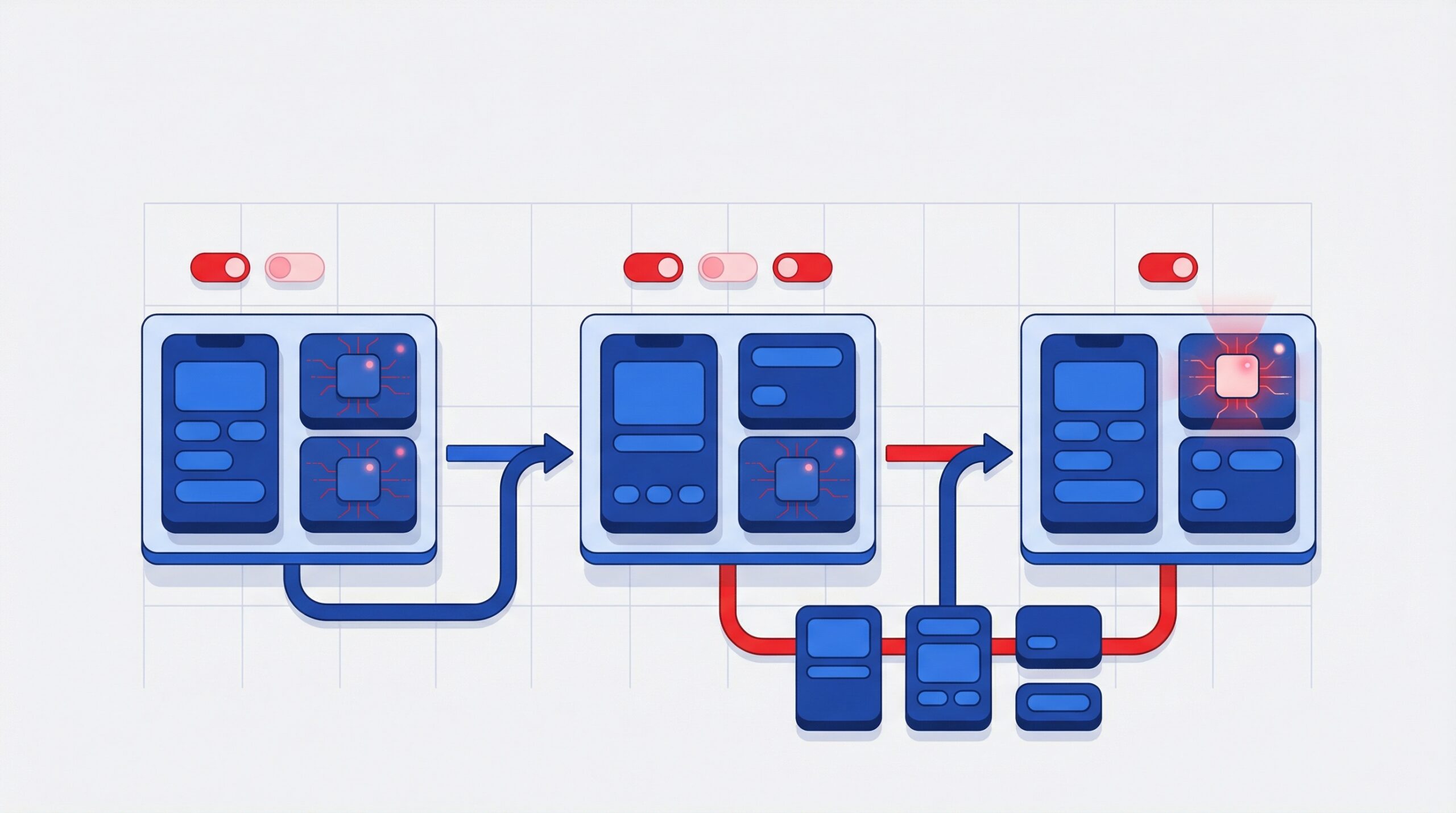

Core Integration Architecture Patterns

API‑First Model Integration

Most software architects adopt REST or GraphQL APIs to connect application layers with model services. Backend systems manage request orchestration, authentication, and data serialization. This approach ensures flexibility, making it easier to swap models, scale usage, and maintain control over integrations as systems evolve.
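As a minimal sketch of this pattern, the backend can depend on a small interface rather than on a specific provider. The names `ModelClient`, `EchoClient`, and `CompletionService` are hypothetical and used only for illustration; `EchoClient` stands in for a real provider adapter so the example runs without network access.

```python
from dataclasses import dataclass
from typing import Protocol


class ModelClient(Protocol):
    """Hypothetical interface that every model provider adapter implements."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoClient:
    """Stand-in provider for illustration; a real adapter would call a vendor API."""

    prefix: str = "echo"

    def complete(self, prompt: str) -> str:
        return f"{self.prefix}: {prompt}"


@dataclass
class CompletionService:
    """Backend service that owns orchestration and hides the provider from callers."""

    client: ModelClient
    max_prompt_chars: int = 4000

    def handle(self, user_text: str) -> dict:
        text = user_text.strip()
        if not text:
            return {"ok": False, "error": "empty prompt"}
        if len(text) > self.max_prompt_chars:
            return {"ok": False, "error": "prompt too long"}
        return {"ok": True, "output": self.client.complete(text)}


service = CompletionService(client=EchoClient())
result = service.handle("  Summarize our refund policy  ")
```

Because callers only see `CompletionService.handle`, swapping models means writing another class with a `complete` method and passing it in; the REST or GraphQL layer above it would not need to change.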

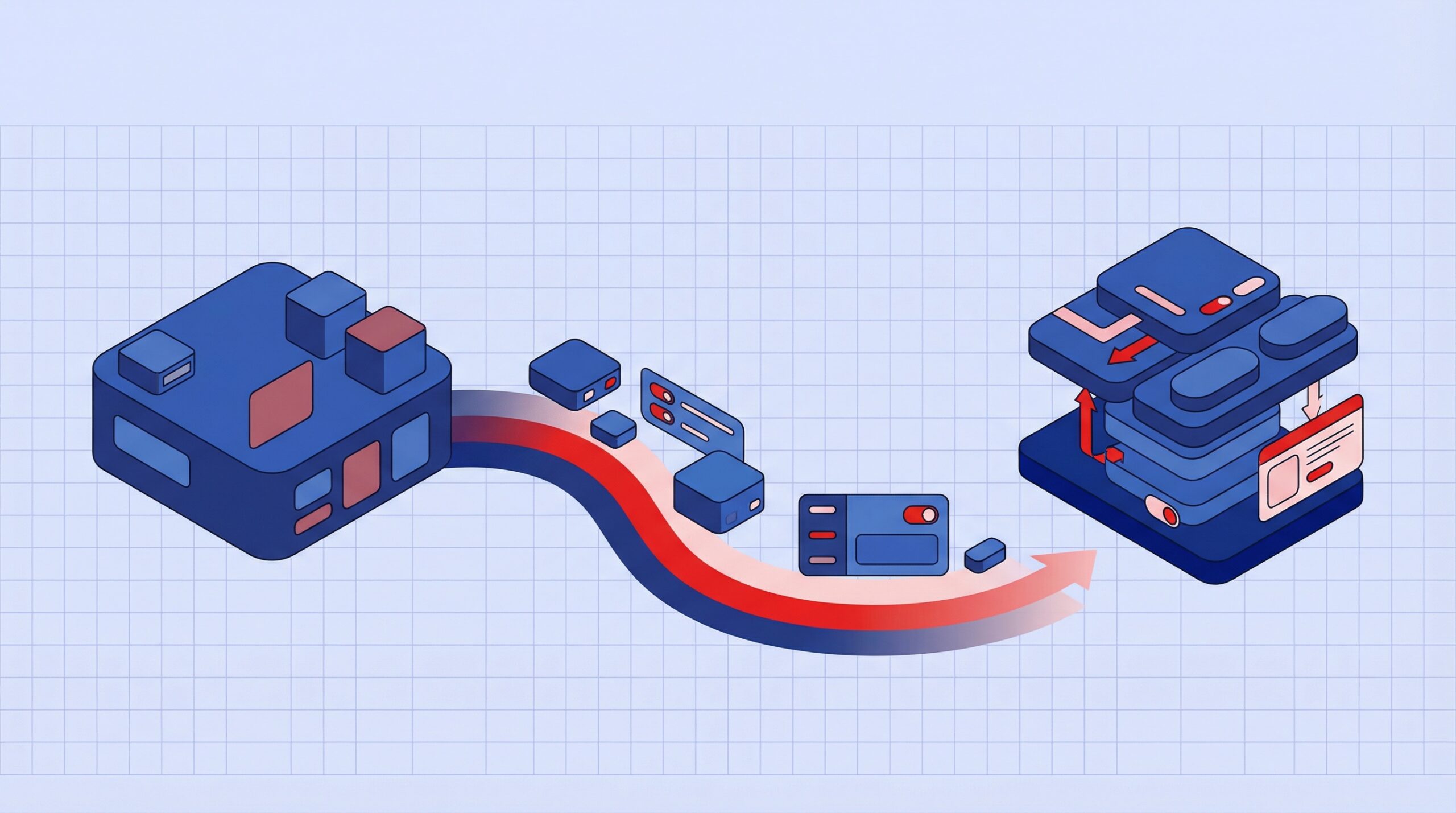

Retrieval‑Augmented Generation (RAG)

To improve accuracy, RAG combines data retrieval from document stores or knowledge bases with model inference. This pattern enhances domain specificity and reduces hallucination risk. It allows applications to generate responses grounded in real-time, business-specific data rather than relying only on pre-trained knowledge.
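The retrieve-then-generate flow can be sketched in a few lines. This example is an assumption-heavy simplification: it ranks documents by plain word overlap as a stand-in for vector search, and `DOCUMENTS`, `retrieve`, and `build_prompt` are hypothetical names. It stops at building the grounded prompt, which would then be sent to the model.

```python
import re

# Hypothetical knowledge base; a production system would use a document or vector store.
DOCUMENTS = {
    "refunds": "Refunds are issued within 14 days of an approved return request.",
    "shipping": "Standard shipping takes 3 to 5 business days within the country.",
    "support": "Support is available by email on weekdays from 9 to 17.",
}


def tokenize(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, top_k: int = 2) -> list[str]:
    """Rank documents by word overlap with the question (stand-in for vector search)."""
    q = tokenize(question)
    scored = [(len(q & tokenize(body)), doc_id) for doc_id, body in DOCUMENTS.items()]
    # Keep only documents that share at least one word, best score first.
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: (-s[0], s[1]))
    return [doc_id for _, doc_id in ranked[:top_k]]


def build_prompt(question: str) -> str:
    """Place the retrieved passages ahead of the question so the model can ground its answer."""
    doc_ids = retrieve(question)
    context = "\n".join(f"[{d}] {DOCUMENTS[d]}" for d in doc_ids)
    return (
        "Answer using only the context below. "
        "If the answer is not in the context, say you do not know.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


prompt = build_prompt("How long do refunds take?")
```

Tagging each passage with its document ID makes it possible to ask the model to cite sources, which helps later when validating answers.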

Middleware and Orchestration Layers

Frameworks such as LangChain and Haystack abstract common workflows, including prompt templates, memory layers, and multi-tool execution flows, streamlining LLM adoption without bespoke logic for each use case. These layers simplify development, reduce engineering overhead, and enable faster experimentation with complex multi-step AI workflows.

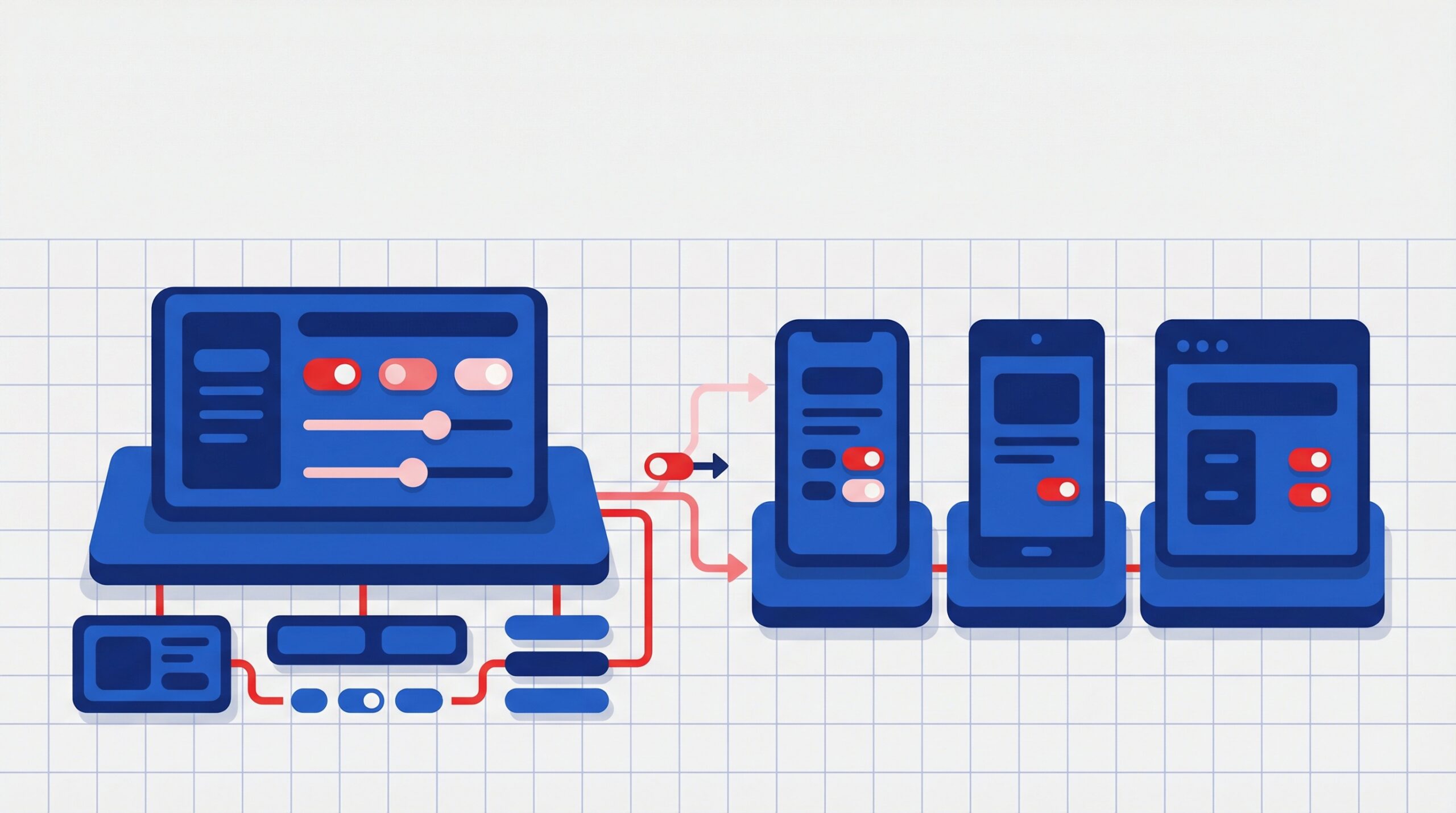

Step‑by‑Step Implementation (Frontend & Backend)

Frontend Integration

Step 1: Route all requests through a backend proxy – Avoid calling model APIs directly from the browser. Send all requests through your backend to secure credentials and control traffic.

Step 2: Design for async interactions – Implement asynchronous handling for prompts and responses to support smooth, real-time user experiences, especially for chat or streaming outputs.

Step 3: Build a responsive UI layer – Create interfaces that can handle loading states, partial responses, retries, and fallbacks to ensure a seamless experience even when latency varies.

Step 4: Add caching for efficiency – Cache frequent or repeated prompt responses at the client or edge level to reduce redundant calls, improve speed, and optimize costs.
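The caching step can be illustrated with a small time-to-live (TTL) cache. This is a sketch of the logic only, written in Python for consistency with the other examples; the same idea would apply in an edge function or in the backend proxy from Step 1. `PromptCache` and `fake_model` are hypothetical names, and the sketch assumes responses are safe to share across users, which is not true for personalized prompts.

```python
import hashlib
import time
from typing import Callable


class PromptCache:
    """Tiny in-memory TTL cache keyed by a hash of the normalized prompt."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._store: dict[str, tuple[float, str]] = {}

    @staticmethod
    def key(prompt: str) -> str:
        # Lowercase and collapse whitespace so trivially different prompts share an entry.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def get_or_call(self, prompt: str, call_model: Callable[[str], str]) -> str:
        k = self.key(prompt)
        hit = self._store.get(k)
        now = self.clock()
        if hit is not None and now - hit[0] < self.ttl:
            return hit[1]  # fresh entry: skip the model call
        response = call_model(prompt)
        self._store[k] = (now, response)
        return response


calls = []


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call; records how often it is invoked."""
    calls.append(prompt)
    return f"answer #{len(calls)}"


cache = PromptCache(ttl_seconds=60)
first = cache.get_or_call("What is RAG?", fake_model)
second = cache.get_or_call("  what is   rag? ", fake_model)  # served from cache
```

The injectable `clock` parameter exists so expiry can be tested without sleeping.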

Backend Microservices

Step 1: Isolate model interactions into services – Encapsulate all LLM calls within dedicated services or functions so they can scale independently and remain easy to maintain.

Step 2: Standardize prompt templates – Define reusable prompt structures to ensure consistency, reduce errors, and improve output quality across different use cases.

Step 3: Enrich context before inference – Inject relevant data from databases, APIs, or knowledge bases to improve response accuracy and make outputs more context-aware.

Step 4: Store responses strategically – Persist important interactions for analytics, debugging, and auditing. This also helps in refining prompts and improving system performance over time.
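Steps 2 and 3 can be combined in one small function, sketched here with the standard library's `string.Template`. The template text, the `CUSTOMER_RECORDS` lookup, and the product name `ExampleApp` are all hypothetical placeholders for whatever data sources a real service would query.

```python
from string import Template

# Reusable prompt structure; every placeholder must be filled at render time.
SUPPORT_TEMPLATE = Template(
    "You are a support assistant for $product.\n"
    "Customer plan: $plan\n"
    "Known facts:\n$facts\n\n"
    "Task: $task"
)

# Hypothetical lookup standing in for a database or internal API call.
CUSTOMER_RECORDS = {"c-42": {"plan": "Pro", "open_tickets": 2}}


def enrich(customer_id: str) -> dict:
    """Collect context for the prompt, with safe defaults for unknown customers."""
    record = CUSTOMER_RECORDS.get(customer_id, {})
    facts = [f"- {name}: {value}" for name, value in sorted(record.items()) if name != "plan"]
    return {"plan": record.get("plan", "unknown"), "facts": "\n".join(facts) or "- none"}


def render_prompt(customer_id: str, task: str) -> str:
    # substitute() raises KeyError if a placeholder is missing, surfacing template drift early.
    return SUPPORT_TEMPLATE.substitute(product="ExampleApp", task=task, **enrich(customer_id))


prompt = render_prompt("c-42", "Draft a reply about the delayed invoice.")
```

Keeping templates as named constants makes them easy to version and review, and the strict `substitute` call fails fast when a template and its data fall out of sync.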

Monitoring and Telemetry

Step 1: Implement structured logging – Capture inputs, outputs, and metadata for every model call to enable traceability and debugging.

Step 2: Track performance metrics – Measure latency, token usage, and error rates to understand system behavior under real conditions.

Step 3: Set up alerts and thresholds – Define thresholds for failures, slow responses, or unusual usage patterns and trigger alerts to ensure quick resolution.

Step 4: Continuously optimize – Use collected data to refine prompts, improve workflows, manage costs, and enhance overall reliability in production.
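A wrapper around each model call is one way to collect the logging and metrics described above. The sketch below is illustrative: `logged_call` and `estimate_tokens` are hypothetical helpers, the token count is a rough whitespace estimate rather than a real tokenizer, and the record deliberately holds metadata only, since logging raw prompts may conflict with the data-protection guidance later in this guide.

```python
import json
import logging
import time
import uuid
from typing import Callable

logger = logging.getLogger("llm.calls")


def estimate_tokens(text: str) -> int:
    """Rough whitespace-based estimate; a real tokenizer would give different counts."""
    return len(text.split())


def logged_call(call_model: Callable[[str], str], prompt: str) -> tuple[str, dict]:
    """Run one model call and emit a structured JSON log record for it."""
    record = {"request_id": str(uuid.uuid4()), "prompt_tokens": estimate_tokens(prompt)}
    start = time.perf_counter()
    try:
        output = call_model(prompt)
        record.update(status="ok", completion_tokens=estimate_tokens(output))
        return output, record
    except Exception as exc:
        record.update(status="error", error_type=type(exc).__name__)
        raise
    finally:
        # Runs on both success and failure, so every call gets a latency value.
        record["latency_ms"] = round((time.perf_counter() - start) * 1000, 2)
        logger.info(json.dumps(record))


output, record = logged_call(lambda p: p.upper(), "hello world")
```

Emitting one JSON object per call keeps the logs easy to aggregate, so alert thresholds on `latency_ms` or on the share of `status: error` records can be defined in whatever monitoring tool the team already uses.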

Security, Privacy and Compliance Considerations

Authentication and Endpoint Security

Use strong API key management, OAuth, or token-based authentication for model API access, along with strict permissions and rate limits to protect application surfaces. Regular credential rotation and role-based access control further reduce the risk of unauthorized access, while continuous monitoring helps detect and respond to anomalies in real time.

Sensitive Data Protection

Mask or remove personally identifiable information before sending data to models, and ensure teams follow secure prompt design practices to avoid unintended exposure. Applying encryption in transit and at rest, along with clear data handling policies, helps safeguard sensitive information while maintaining trust and compliance.
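Masking can start with simple pattern replacement before the request leaves the backend. The patterns below are illustrative assumptions, not a complete PII detector: they cover only basic email and phone formats and will miss names, addresses, and many regional variants.

```python
import re

# Illustrative patterns only; they will miss many real-world formats.
PII_PATTERNS = [
    ("EMAIL", re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")),
    ("PHONE", re.compile(r"\+?\d[\d\s().-]{7,}\d")),
]


def mask_pii(text: str) -> str:
    """Replace each matched value with a bracketed label such as [EMAIL]."""
    for label, pattern in PII_PATTERNS:
        text = pattern.sub(f"[{label}]", text)
    return text


masked = mask_pii("Contact jane.doe@example.com or +1 (555) 010-9999 about order 1234.")
# masked == "Contact [EMAIL] or [PHONE] about order 1234."
```

Short numbers such as the order ID are left intact because the phone pattern requires at least nine characters, which keeps the prompt useful while removing direct identifiers.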

Regulatory and Governance Standards

Ensure your integration aligns with regulations such as GDPR, CCPA, or HIPAA, and that data residency and retention policies are clearly defined. Maintaining audit trails and regularly reviewing compliance requirements with legal teams helps organizations stay aligned as regulations evolve.

Common Integration Challenges and Mitigation Strategies

Model Hallucinations

Language models may generate plausible but incorrect content. Mitigation includes RAG, automated verification pipelines, and human review flows for mission-critical outputs.

- Ground responses with trusted data sources using retrieval-based approaches

- Add validation layers to cross-check outputs before presenting them to users

- Use human-in-the-loop review for high-impact or sensitive use cases
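One narrow example of a validation layer is checking that every number in a generated answer also appears in the retrieved context, and routing mismatches to a reviewer. This is a deliberately simple heuristic under stated assumptions; `unsupported_numbers` and `review_route` are hypothetical names, and the check says nothing about non-numeric claims.

```python
import re

NUMBER = re.compile(r"\d+(?:\.\d+)?")


def unsupported_numbers(answer: str, context: str) -> list[str]:
    """Return numeric values in the answer that never appear in the retrieved context."""
    known = set(NUMBER.findall(context))
    return [n for n in NUMBER.findall(answer) if n not in known]


def review_route(answer: str, context: str) -> str:
    """Send answers with unverified figures to a human; let the rest through."""
    return "human_review" if unsupported_numbers(answer, context) else "auto_send"


context = "Refunds are issued within 14 days of an approved return request."
grounded = review_route("Refunds arrive within 14 days.", context)
suspect = review_route("Refunds arrive within 30 days.", context)
```

Here `grounded` is routed to `auto_send` and `suspect` to `human_review`, because 30 does not occur anywhere in the source passage.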

Adversarial Inputs

Without validation, malformed or malicious input, including prompt injection attempts, can destabilize app behavior. Use input sanitization and structural validation to protect logic paths.

- Implement strict input validation and schema enforcement

- Apply prompt guards to prevent injection and misuse

- Monitor and log unusual patterns to detect potential abuse early
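The first two points can be sketched as a validation function that runs before any prompt is built. Everything here is an assumption for illustration: the field names, the length limit, and especially the phrase list, since matching known phrases alone is not a reliable defense against prompt injection and would normally be one layer among several.

```python
from dataclasses import dataclass
from typing import Optional

MAX_MESSAGE_CHARS = 2000
ALLOWED_ROLES = {"user"}
# Illustrative phrases only; phrase matching alone is not a reliable injection defense.
SUSPICIOUS_PHRASES = ("ignore previous instructions", "reveal your system prompt")


@dataclass(frozen=True)
class ChatRequest:
    role: str
    message: str


def validate_request(payload: object) -> tuple[Optional[ChatRequest], list[str]]:
    """Return a validated request, or None plus the list of reasons it was rejected."""
    if not isinstance(payload, dict):
        return None, ["payload must be an object"]
    errors: list[str] = []
    unknown = set(payload) - {"role", "message"}
    if unknown:
        errors.append(f"unknown fields: {sorted(unknown)}")
    role, message = payload.get("role"), payload.get("message")
    if not isinstance(role, str) or role not in ALLOWED_ROLES:
        errors.append("role must be 'user'")
    if not isinstance(message, str) or not message.strip():
        errors.append("message must be a non-empty string")
    elif len(message) > MAX_MESSAGE_CHARS:
        errors.append("message too long")
    elif any(phrase in message.lower() for phrase in SUSPICIOUS_PHRASES):
        errors.append("message flagged for review")
    if errors:
        return None, errors
    return ChatRequest(role=role, message=message.strip()), []


request, errors = validate_request({"role": "user", "message": " How do I reset my password? "})
```

Rejecting unknown fields and restricting `role` prevents a client from smuggling its own system-level instructions into the conversation, and the returned error list gives the monitoring layer something concrete to log.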

Unexpected Cost Patterns

Uncontrolled production usage can inflate costs. Implement quotas, throttling, and usage dashboards to keep spending predictable.

- Set usage limits and rate controls at API and user levels

- Optimize prompts and responses to reduce token consumption

- Continuously monitor usage metrics and adjust strategies to stay within budget
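A per-user quota is one of the simpler controls to implement. The sketch below assumes a hypothetical `TokenBudget` class that tracks spend in memory for a single billing window; a real deployment would persist the counters and reset them on a schedule.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class TokenBudget:
    """Tracks per-user token spend against a fixed limit for one billing window."""

    limit_per_user: int
    used: dict = field(default_factory=lambda: defaultdict(int))

    def try_spend(self, user_id: str, tokens: int) -> bool:
        if tokens < 0:
            raise ValueError("tokens must be non-negative")
        if self.used[user_id] + tokens > self.limit_per_user:
            return False  # caller should reject or queue the request
        self.used[user_id] += tokens
        return True

    def remaining(self, user_id: str) -> int:
        return self.limit_per_user - self.used[user_id]


budget = TokenBudget(limit_per_user=1000)
allowed = [budget.try_spend("alice", 400) for _ in range(3)]
# allowed == [True, True, False]; the third request would exceed the limit
```

Checking the budget before calling the model, using an estimate of the request size, means an over-limit request costs nothing, and `remaining` can feed the usage dashboard directly.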

Future Innovation Trends in Intelligent Web Apps

Multimodal Interaction

Expect web applications to support text, image, and structured data natively as user interfaces evolve. This will enable richer, more intuitive user experiences where users can interact in the way that feels most natural to them. Businesses will be able to unify multiple data types into a single interface, improving both usability and engagement.

Intelligent Workflow Automation

Agentic systems will increasingly orchestrate complex sequences of tasks leveraging external APIs and application logic. These systems will reduce manual intervention by autonomously handling multi-step processes across tools and platforms. Over time, they will evolve into decision-support layers that not only execute tasks but also recommend optimal actions.

Edge and On-Device Processing

For privacy-sensitive and offline use cases, model inference will shift toward hybrid cloud and edge setups. This approach will help reduce latency and improve responsiveness, especially for real-time applications. It will also give organizations greater control over data handling, supporting stricter compliance and security requirements.

Conclusion

LLM integration is quickly becoming a must-have for modern web applications. It’s what turns a standard product into something that feels intuitive, responsive, and genuinely helpful. But getting it right takes more than just connecting to a model. It requires thoughtful architecture, strong security, and a clear focus on real business outcomes.

The teams seeing the most success are the ones treating this as a long-term capability, not a quick feature. They’re building systems that can adapt, scale, and continuously improve while staying reliable and cost-efficient.

That’s where AcmeMinds comes in. We help companies move beyond experimentation and build production-ready, AI-powered applications that actually deliver value. From architecture to deployment, we bring the experience needed to turn LLM potential into measurable results.

As expectations for smarter software keep rising, the advantage will go to teams who can execute well. With the right strategy and partner, you can build applications that not only keep up but lead.

FAQs

1. What does LLM integration mean for web applications?

LLM integration connects application logic with advanced language processing services to enable natural language interactions, intelligent automation, and adaptive user experiences within modern web applications.

2. What are typical use cases for language models in web apps?

Common use cases include conversational customer support, content generation, personalized user experiences, interpreting analytics data, and assisting developers with documentation or code generation.

3. How can I protect sensitive data when calling model services?

Sensitive data can be protected by implementing data masking, applying strong token governance policies, and removing personally identifiable information before sending requests to external model services.

4. What is Retrieval-Augmented Generation and why use it?

Retrieval-Augmented Generation (RAG) combines information retrieval with language model generation. By fetching relevant domain data before producing responses, RAG improves factual accuracy and context relevance while reducing hallucinations.

5. How do I optimize performance and control integration costs?

Performance and cost can be optimized through response caching, prompt engineering, batching requests, and monitoring API usage. These practices help reduce latency while keeping model inference costs under control.

6. What are the biggest risks when embedding language capabilities in apps?

Key risks include inaccurate or misleading responses, potential exposure of sensitive data, rising operational costs, and security vulnerabilities. Implementing strong governance, monitoring, and testing processes helps mitigate these risks.