Building Enterprise AI Applications with Vertex AI

Enterprises face increasing pressure to turn data into actionable insights while maintaining security, compliance, and operational efficiency. Organizations are moving beyond experimentation to operational deployment, integrating intelligent systems with legacy software and business processes. Vertex AI provides a cloud-native, managed platform for building, deploying, and governing machine learning models at scale.

This guide explores how Vertex AI enables enterprise-grade applications, details architecture and integration considerations, and provides best practices for successful implementation. It is intended for technical leaders, enterprise architects, and engineering teams responsible for deploying production-ready intelligent systems.

Understanding Vertex AI for Enterprise Workloads

Vertex AI is a managed platform designed to support the entire lifecycle of enterprise machine learning applications. It provides centralized control for model training, evaluation, deployment, and governance while integrating seamlessly with the Google Cloud ecosystem. The platform handles structured, unstructured, and streaming data, supporting high-throughput production inference for distributed applications.

Vertex AI enables enterprises to build reliable, repeatable workflows with features such as:

- Model training and evaluation using AutoML or custom jobs

- Centralized model registry for versioning, lineage, and performance metrics

- Managed endpoints for real-time inference, plus batch prediction jobs for offline inference

- Feature store for consistent and secure access to training and inference data

- Pipeline orchestration to coordinate preprocessing, model training, validation, and deployment

The platform addresses common enterprise challenges by centralizing operational control, supporting hybrid workloads, and scaling compute resources efficiently. According to industry adoption data, 87 percent of organizations with production machine learning report measurable improvements in operational efficiency after standardizing on a unified platform for model training, deployment, and monitoring.

Vertex AI ensures enterprise-grade security, monitoring, and governance through:

- Role-based access control and encryption for data at rest and in transit

- Logging, metrics, and alerting for performance monitoring

- Auditability and traceability for regulatory compliance

Enterprise Architecture and Integration Patterns

Enterprise machine learning systems must operate within complex technology environments that include legacy software, cloud platforms, and large data infrastructures. A strong architectural foundation ensures models can access enterprise data, integrate with operational applications, and scale reliably in production environments.

Most organizations adopt modular architecture when deploying machine learning systems. This approach separates data pipelines, model logic, inference services, and application layers. Modular design allows engineering teams to update individual components without disrupting the entire platform while also improving scalability and maintainability.

Several integration patterns are commonly used in enterprise environments.

Microservices-based model deployment

Many enterprises deploy models as independent services that expose prediction capabilities through APIs. Applications and internal platforms send requests to these services to retrieve predictions or insights. This design allows models to scale independently and enables teams to update models without affecting other systems.
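The request and response contract of such a service can be sketched with the Python standard library alone. This is a minimal illustration, not a Vertex AI API: the function name `handle_predict_request`, the `instances` payload field, the `MODEL_VERSION` label, and the stand-in scoring rule are all assumptions made for the example.

```python
import json

MODEL_VERSION = "v1"  # hypothetical version label returned with each prediction


def score(features: dict) -> float:
    """Stand-in model: a fixed linear rule used only for illustration."""
    return round(0.5 * features["amount"] / 1000 + 0.1 * features["num_items"], 4)


def handle_predict_request(body: str) -> tuple[int, str]:
    """Parse a JSON request body and return (HTTP status, JSON response body)."""
    try:
        payload = json.loads(body)
        predictions = [score(instance) for instance in payload["instances"]]
    except (json.JSONDecodeError, KeyError, TypeError):
        # Malformed input is rejected before it can affect callers downstream.
        return 400, json.dumps({"error": "invalid request"})
    return 200, json.dumps(
        {"model_version": MODEL_VERSION, "predictions": predictions}
    )


status, response = handle_predict_request(
    '{"instances": [{"amount": 2000, "num_items": 3}]}'
)
print(status, response)
```

Because callers depend only on this contract, the scoring function behind it can be replaced or scaled without changes to the consuming applications.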

Event-driven processing

Enterprise platforms generate large volumes of operational events such as transactions, user activity, or system alerts. Event-driven architectures allow models to analyze these events as they occur. Message brokers distribute events to downstream services, enabling real-time analysis and automated responses.
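The publish and subscribe flow described above can be sketched with a toy in-memory broker. The `InMemoryBroker` class, the `transactions` topic, and the threshold-based scoring rule are hypothetical stand-ins; a production system would use a managed messaging service and a deployed model.

```python
from collections import defaultdict
from typing import Callable


class InMemoryBroker:
    """Toy stand-in for a message broker, used only to show the flow."""

    def __init__(self) -> None:
        self._subscribers = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[dict], None]) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> None:
        # Deliver the event to every handler registered for the topic.
        for handler in self._subscribers[topic]:
            handler(event)


alerts: list[dict] = []


def score_transaction(event: dict) -> None:
    """Hypothetical scoring rule: flag transactions above a fixed amount."""
    risk = 0.9 if event["amount"] > 10_000 else 0.1
    if risk > 0.5:
        alerts.append({"transaction_id": event["id"], "risk": risk})


broker = InMemoryBroker()
broker.subscribe("transactions", score_transaction)
broker.publish("transactions", {"id": "t-1", "amount": 250})
broker.publish("transactions", {"id": "t-2", "amount": 50_000})
print(alerts)
```

The publisher never calls the model directly, so additional consumers can subscribe to the same topic without changes to the system that emits the events.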

Decoupled data and feature access

Enterprise AI applications rely on reliable data pipelines and feature management systems. Feature stores and centralized data repositories provide consistent access to training and inference data. This ensures that models receive the same feature definitions during development and production, improving prediction reliability.
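One way to picture shared feature definitions is a single registry of feature functions that both the training path and the serving path call. The sketch below is illustrative only: `FEATURE_DEFINITIONS`, `build_features`, and the two example features are assumptions, not part of any feature store API.

```python
from datetime import date

# Single source of truth for feature logic; the feature names are illustrative.
FEATURE_DEFINITIONS = {
    "account_age_days": lambda record, today: (today - record["opened_on"]).days,
    "avg_order_value": lambda record, today: (
        record["total_spend"] / max(record["order_count"], 1)
    ),
}


def build_features(record: dict, today: date) -> dict:
    """Compute every registered feature for one raw record."""
    return {name: fn(record, today) for name, fn in FEATURE_DEFINITIONS.items()}


raw = {"opened_on": date(2024, 1, 1), "total_spend": 300.0, "order_count": 4}

# Training and serving call the same function, so the definitions cannot diverge.
training_row = build_features(raw, today=date(2024, 1, 31))
serving_row = build_features(raw, today=date(2024, 1, 31))
print(training_row == serving_row)
```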

Together, these architecture patterns support scalable deployments, controlled updates, and operational reliability. They allow machine learning systems to function as integrated components of enterprise software rather than isolated analytical tools.

Data Engineering and Feature Management

High-quality data is the foundation of reliable enterprise machine learning applications. Models can only perform well when they are trained and served with accurate, consistent, and well-governed data. For this reason, enterprises invest heavily in data engineering processes that prepare and manage the datasets used across machine learning workflows.

Feature management plays an important role in this process. A feature store provides a centralized environment where teams define, store, and reuse features used for model training and inference. This ensures consistency across environments and allows multiple teams to work with the same trusted data definitions. Feature stores also support governance requirements by maintaining access controls, version history, and audit logs for data usage.

Strong data pipelines are equally critical for enterprise deployments. Data engineering workflows should include validation, cleansing, and normalization steps to ensure that models receive reliable inputs. These pipelines must also be resilient and traceable so organizations can monitor how data moves through the system and identify potential issues quickly.
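The validation, cleansing, and normalization steps can be sketched as three small functions chained together. The field names, the rejection rule, and min-max scaling are assumptions chosen for brevity; real pipelines would typically run on a data processing framework and log rejected rows for traceability.

```python
def validate_rows(rows: list[dict], required: tuple[str, ...]) -> tuple[list[dict], list[dict]]:
    """Split rows into (valid, rejected) based on required non-null fields."""
    valid, rejected = [], []
    for row in rows:
        ok = all(row.get(name) is not None for name in required)
        (valid if ok else rejected).append(row)
    return valid, rejected


def cleanse(rows: list[dict]) -> list[dict]:
    """Trim whitespace and lower-case the country code."""
    return [{**row, "country": row["country"].strip().lower()} for row in rows]


def normalize(rows: list[dict], name: str) -> list[dict]:
    """Min-max scale a numeric field to the range [0, 1]."""
    if not rows:
        return []
    values = [row[name] for row in rows]
    low, high = min(values), max(values)
    span = (high - low) or 1.0  # avoid division by zero for constant columns
    return [{**row, name: (row[name] - low) / span} for row in rows]


raw_rows = [
    {"id": 1, "country": " US ", "amount": 10.0},
    {"id": 2, "country": "de", "amount": 30.0},
    {"id": 3, "country": None, "amount": 20.0},
]
valid, rejected = validate_rows(raw_rows, required=("country", "amount"))
clean = normalize(cleanse(valid), "amount")
print(clean)
print("rejected ids:", [row["id"] for row in rejected])
```

Keeping rejected rows instead of silently dropping them is what makes the pipeline traceable: the rejection count can be monitored and investigated.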

Vertex AI integrates with widely used enterprise data services such as Cloud Storage, BigQuery, and real-time streaming platforms. These integrations allow teams to build reproducible feature pipelines, maintain clear data lineage, and apply governance policies across the entire data lifecycle. As a result, organizations can scale machine learning initiatives while maintaining confidence in the data that powers their models.

Model Training, Versioning, and Registry

Enterprise machine learning models must be reliable, reproducible, and continuously monitored throughout their lifecycle. Unlike experimental environments, production systems require clear processes for training, testing, version management, and deployment. Structured model lifecycle management ensures that organizations can track model performance, reproduce results, and safely introduce updates without disrupting business operations.

Vertex AI provides a managed environment that supports scalable training workloads, automated experimentation, and centralized model management. These capabilities allow engineering and data science teams to develop models efficiently while maintaining transparency and governance across the development process.

Model Training at Enterprise Scale

Training enterprise models often requires large datasets and significant compute resources. Vertex AI supports distributed training across multiple machines, with compute resources sized to the requirements of each workload.

Key training capabilities include:

- Distributed training infrastructure that allows models to train across multiple compute nodes for faster processing

- Hyperparameter tuning to search automatically for better-performing model configurations

- Experiment tracking that records training runs, configurations, and results for reproducibility

- Support for custom and automated training approaches depending on the complexity of the use case

These capabilities help organizations reduce development cycles while improving model accuracy and reliability.
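The relationship between hyperparameter tuning and experiment tracking can be illustrated with a small grid search that logs every run. This is a sketch under stated assumptions: `train_and_evaluate` is a synthetic stand-in for a training job, the search space is invented, and exhaustive grid search is used for simplicity rather than to mirror how a managed tuning service searches.

```python
from itertools import product


def train_and_evaluate(learning_rate: float, depth: int) -> float:
    """Stand-in for a training job; returns a synthetic validation score."""
    return round(1.0 - abs(learning_rate - 0.1) - 0.05 * abs(depth - 4), 4)


search_space = {"learning_rate": [0.01, 0.1, 0.5], "depth": [2, 4, 6]}

# Every run is recorded with its parameters and result so it can be reproduced.
experiment_log = []
combinations = product(search_space["learning_rate"], search_space["depth"])
for run_id, (learning_rate, depth) in enumerate(combinations):
    val_score = train_and_evaluate(learning_rate, depth)
    experiment_log.append(
        {
            "run_id": run_id,
            "params": {"learning_rate": learning_rate, "depth": depth},
            "score": val_score,
        }
    )

best_run = max(experiment_log, key=lambda run: run["score"])
print(best_run)
```

The log, not just the winning configuration, is the useful artifact: it lets a team rerun or audit any trial later.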

Model Versioning and Registry Management

Once models are trained, organizations must maintain clear version control and documentation. A centralized model registry acts as a repository for storing and managing model artifacts.

A model registry typically maintains:

- Model versions and associated training configurations

- Metadata describing datasets, features, and training parameters

- Performance metrics used for evaluation and comparison

- Lineage records that track how each model was created

This centralized system allows teams to compare models, reproduce previous results, and ensure transparency across development cycles.
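The kind of record a registry keeps can be sketched as a small in-memory structure. The `ModelRegistry` and `ModelVersion` classes, the `gs://example-bucket/...` URIs, and the dataset identifiers below are hypothetical and exist only to show how versions, metrics, and lineage relate; they do not represent the Vertex AI Model Registry API.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ModelVersion:
    name: str
    version: int
    artifact_uri: str
    metrics: dict
    lineage: dict  # for example, dataset snapshot and training config identifiers


@dataclass
class ModelRegistry:
    _versions: dict = field(default_factory=dict)

    def register(self, name: str, artifact_uri: str, metrics: dict, lineage: dict) -> ModelVersion:
        """Store a new version; version numbers increase per model name."""
        versions = self._versions.setdefault(name, [])
        entry = ModelVersion(name, len(versions) + 1, artifact_uri, metrics, lineage)
        versions.append(entry)
        return entry

    def best(self, name: str, metric: str) -> ModelVersion:
        """Return the version with the highest value for the given metric."""
        return max(self._versions[name], key=lambda v: v.metrics[metric])


registry = ModelRegistry()
registry.register(
    "churn", "gs://example-bucket/churn/1", {"auc": 0.81}, {"dataset": "customers_2024_01"}
)
registry.register(
    "churn", "gs://example-bucket/churn/2", {"auc": 0.86}, {"dataset": "customers_2024_02"}
)
best = registry.best("churn", "auc")
print(best.version, best.lineage)
```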

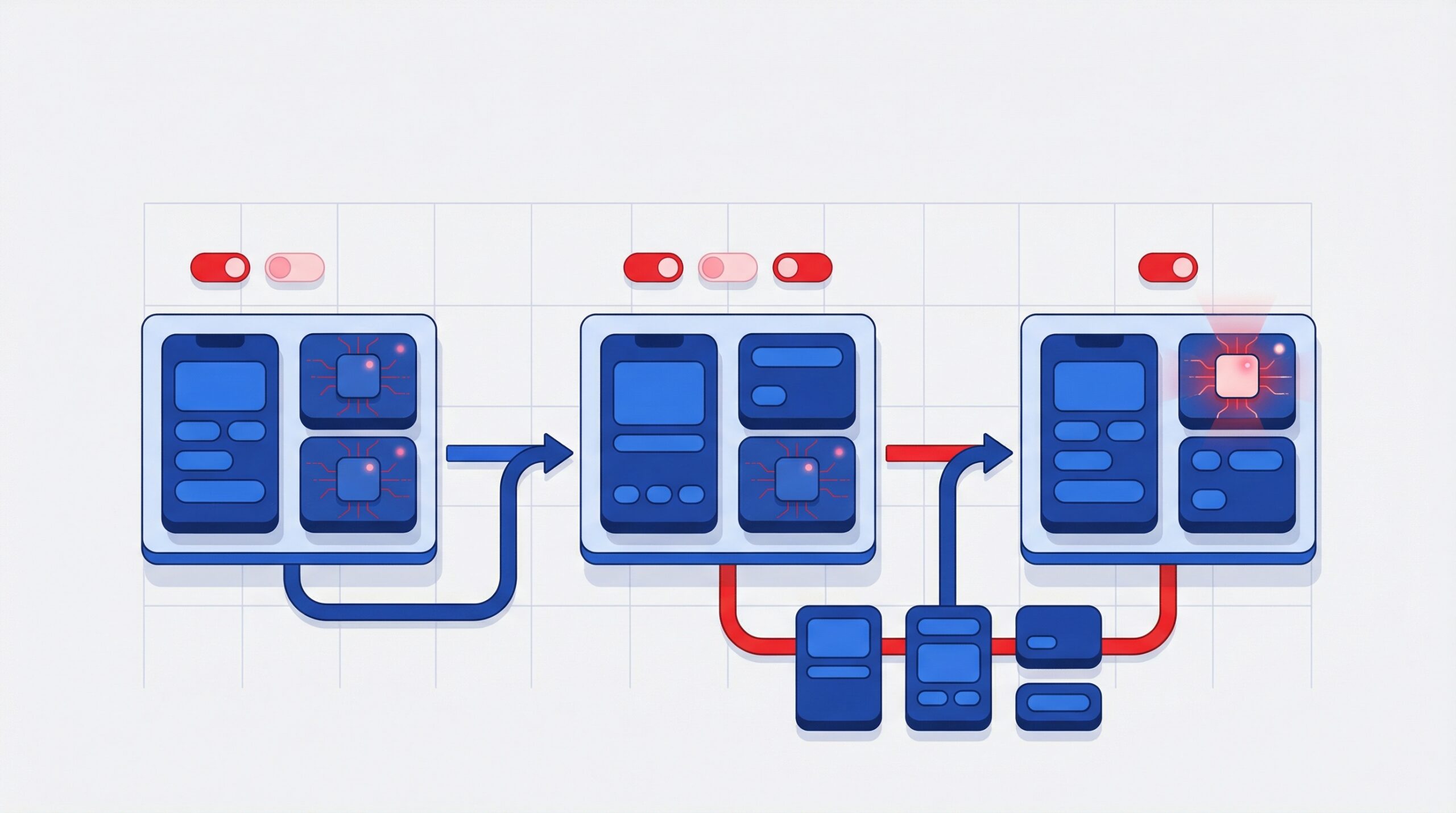

Controlled Deployment and Model Rollouts

Before deploying models into production, organizations must validate performance and monitor potential risks. Controlled deployment strategies allow teams to introduce model updates gradually while observing their behavior.

Common deployment approaches include:

- Canary deployments, where a small percentage of traffic is directed to a new model version

- Shadow testing, where predictions from a new model are evaluated without affecting production outcomes

- Performance monitoring, which tracks accuracy, latency, and operational metrics

These practices ensure that model updates improve system performance without introducing instability or unexpected outcomes in enterprise applications.
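Canary routing and shadow testing can both be sketched in a few lines. The example assumes a hash-based assignment so that a given request ID always lands on the same version; the function names, the two toy models, and the 10 percent split are illustrative assumptions rather than a description of how a managed endpoint splits traffic.

```python
import hashlib


def route_version(request_id: str, canary_percent: int) -> str:
    """Deterministically assign a request to 'stable' or 'canary'."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100
    return "canary" if bucket < canary_percent else "stable"


def stable_model(x: float) -> float:
    return 2.0 * x


def candidate_model(x: float) -> float:
    return 2.0 * x + 0.5


def predict_with_shadow(x: float, shadow_log: list) -> float:
    """Serve the stable prediction; record the candidate's output for offline comparison."""
    served = stable_model(x)
    shadow_log.append({"input": x, "served": served, "shadow": candidate_model(x)})
    return served


# With a well-mixed hash, about canary_percent of requests reach the canary.
assignments = [route_version(f"req-{i}", canary_percent=10) for i in range(1000)]
print("canary share:", assignments.count("canary") / len(assignments))

shadow_log: list = []
result = predict_with_shadow(3.0, shadow_log)
print(result, shadow_log)
```

In the shadow path the caller only ever sees the stable model's output, which is why shadow testing carries no production risk while still producing comparison data.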

Model Deployment and Serving Strategies

After a model is validated, it must be deployed in a way that ensures reliability, scalability, and consistent performance in production environments. Enterprise systems typically support both operational applications that require instant predictions and analytical workloads that process large datasets.

Real-time inference

Real-time endpoints return predictions synchronously when an application sends a request. These endpoints are commonly used in customer-facing systems where low latency is critical.

Typical use cases include:

- Fraud detection during financial transactions

- Recommendation engines in digital platforms

- Customer support automation

- Risk scoring for operational decisions

To maintain performance, enterprises rely on managed endpoints, load balancing, and continuous monitoring of latency and response times.

Batch inference

Batch processing generates predictions for large datasets at scheduled intervals. This approach is more efficient for analytical workloads that do not require immediate responses.

Common scenarios include:

- Customer segmentation analysis

- Forecasting and reporting

- Large-scale data enrichment
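A batch scoring job typically reads records in fixed-size chunks, scores each chunk, and writes the results. The sketch below assumes an in-memory list of customers and a threshold rule in place of a real model; `chunked`, `predict_batch`, and the segment labels are hypothetical names.

```python
from itertools import islice
from typing import Iterable, Iterator


def chunked(records: Iterable[dict], size: int) -> Iterator[list[dict]]:
    """Yield successive lists of at most `size` records."""
    iterator = iter(records)
    while batch := list(islice(iterator, size)):
        yield batch


def predict_batch(batch: list[dict]) -> list[str]:
    """Stand-in model: assign a segment from a spend threshold."""
    return ["high_value" if record["spend"] >= 500 else "standard" for record in batch]


customers = [{"id": i, "spend": i * 100} for i in range(10)]

results = []
for batch in chunked(customers, size=4):
    for record, segment in zip(batch, predict_batch(batch)):
        results.append({"id": record["id"], "segment": segment})

print(results)
```

Chunking keeps memory use bounded regardless of dataset size, which is the main practical difference from scoring one request at a time.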

Scalability and performance management

Enterprise deployments maintain stability through:

- Autoscaling that adjusts compute resources based on traffic

- Pre-warmed instances that reduce latency during sudden demand spikes

- Monitoring tools that track system performance and usage

These deployment strategies allow organizations to deliver reliable predictions while controlling infrastructure costs.
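The interaction between autoscaling and pre-warmed capacity can be made concrete with a generic target-tracking rule: scale the replica count in proportion to observed versus target utilization, then clamp it between a floor and a ceiling. This formula is a common pattern assumed here for illustration, not documented Vertex AI behavior, and the parameter names are hypothetical.

```python
import math


def desired_replicas(
    current_replicas: int,
    observed_pct: int,
    target_pct: int,
    min_replicas: int,
    max_replicas: int,
) -> int:
    """Scale replicas proportionally to observed/target utilization, within bounds."""
    raw = math.ceil(current_replicas * observed_pct / target_pct)
    # min_replicas acts as the pre-warmed floor; max_replicas caps cost.
    return max(min_replicas, min(max_replicas, raw))


print(desired_replicas(4, observed_pct=90, target_pct=60, min_replicas=2, max_replicas=10))
print(desired_replicas(2, observed_pct=10, target_pct=60, min_replicas=2, max_replicas=10))
print(desired_replicas(4, observed_pct=300, target_pct=60, min_replicas=2, max_replicas=10))
```

The three calls cover a scale-up within bounds, a quiet period where the pre-warmed floor holds capacity in place, and a spike that is capped at the maximum.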

Workflow Orchestration and Operational Best Practices

Workflow orchestration coordinates critical stages such as data preparation, model training, validation, and deployment so that processes remain repeatable and auditable.

Automated pipelines help reduce manual intervention and improve operational efficiency. They ensure that each stage of the workflow follows defined processes, allowing teams to deploy models with greater confidence.
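A pipeline of this kind can be sketched as an ordered list of stage functions with a validation gate before deployment. The stage names, the context dictionary, and the toy "model" (a mean) are assumptions for illustration; a managed orchestration service would add scheduling, retries, and artifact tracking on top of the same idea.

```python
def preprocess(ctx: dict) -> dict:
    ctx["rows"] = [row for row in ctx["raw_rows"] if row is not None]
    return ctx


def train(ctx: dict) -> dict:
    # Stand-in "model": the mean of the training rows.
    ctx["model"] = sum(ctx["rows"]) / len(ctx["rows"])
    return ctx


def validate_model(ctx: dict) -> dict:
    # Gate: the pipeline stops here unless the metric meets the threshold.
    ctx["metric"] = 1.0 - abs(ctx["model"] - ctx["expected_mean"])
    if ctx["metric"] < ctx["min_metric"]:
        raise ValueError(f"validation failed: metric={ctx['metric']:.2f}")
    return ctx


def deploy(ctx: dict) -> dict:
    ctx["deployed"] = True
    return ctx


def run_pipeline(ctx: dict, stages: list) -> dict:
    """Run stages in order, recording an audit trail of completed stage names."""
    ctx["audit"] = []
    for stage in stages:
        ctx = stage(ctx)
        ctx["audit"].append(stage.__name__)
    return ctx


STAGES = [preprocess, train, validate_model, deploy]
pipeline_result = run_pipeline(
    {"raw_rows": [1.0, None, 3.0], "expected_mean": 2.0, "min_metric": 0.9}, STAGES
)
print(pipeline_result["audit"])
```

Because the gate raises before `deploy` runs, a model that fails validation never reaches production, and the audit trail shows exactly which stages completed.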

Best practices for workflow orchestration include:

- Automate pipeline stages so preprocessing, training, validation, and deployment follow standardized processes

- Implement continuous integration and deployment practices to validate model performance and data quality before production releases

- Maintain experiment tracking and version control for models, datasets, and configurations

- Monitor model performance and infrastructure metrics to detect anomalies or performance degradation early

- Establish alerting systems that notify engineering teams when data drift, latency issues, or operational failures occur

By following these practices, organizations can ensure their machine learning systems remain stable, transparent, and scalable in production environments.
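The monitoring and alerting practices above can be sketched as a check that compares current inputs against a training baseline and inspects latency. The drift measure used here (shift of the mean in baseline standard deviations) is a deliberately simple assumption, as are the thresholds and function names; production systems often use richer distribution tests.

```python
from statistics import mean, stdev


def drift_score(baseline: list[float], current: list[float]) -> float:
    """Shift of the current mean from the baseline mean, in baseline standard deviations."""
    return abs(mean(current) - mean(baseline)) / stdev(baseline)


def check_alerts(
    baseline: list[float],
    current: list[float],
    p95_latency_ms: float,
    drift_threshold: float = 2.0,
    latency_threshold_ms: float = 200.0,
) -> list[str]:
    """Return the names of all alert conditions that are currently firing."""
    firing = []
    if drift_score(baseline, current) > drift_threshold:
        firing.append("data_drift")
    if p95_latency_ms > latency_threshold_ms:
        firing.append("latency")
    return firing


baseline_values = [10.0, 12.0, 11.0, 9.0, 13.0]
print(check_alerts(baseline_values, [10.5, 11.5, 11.0], p95_latency_ms=120.0))
print(check_alerts(baseline_values, [20.0, 22.0, 21.0], p95_latency_ms=350.0))
```

The first call represents healthy traffic and fires no alerts; the second has both a shifted input distribution and slow responses, so both conditions fire.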

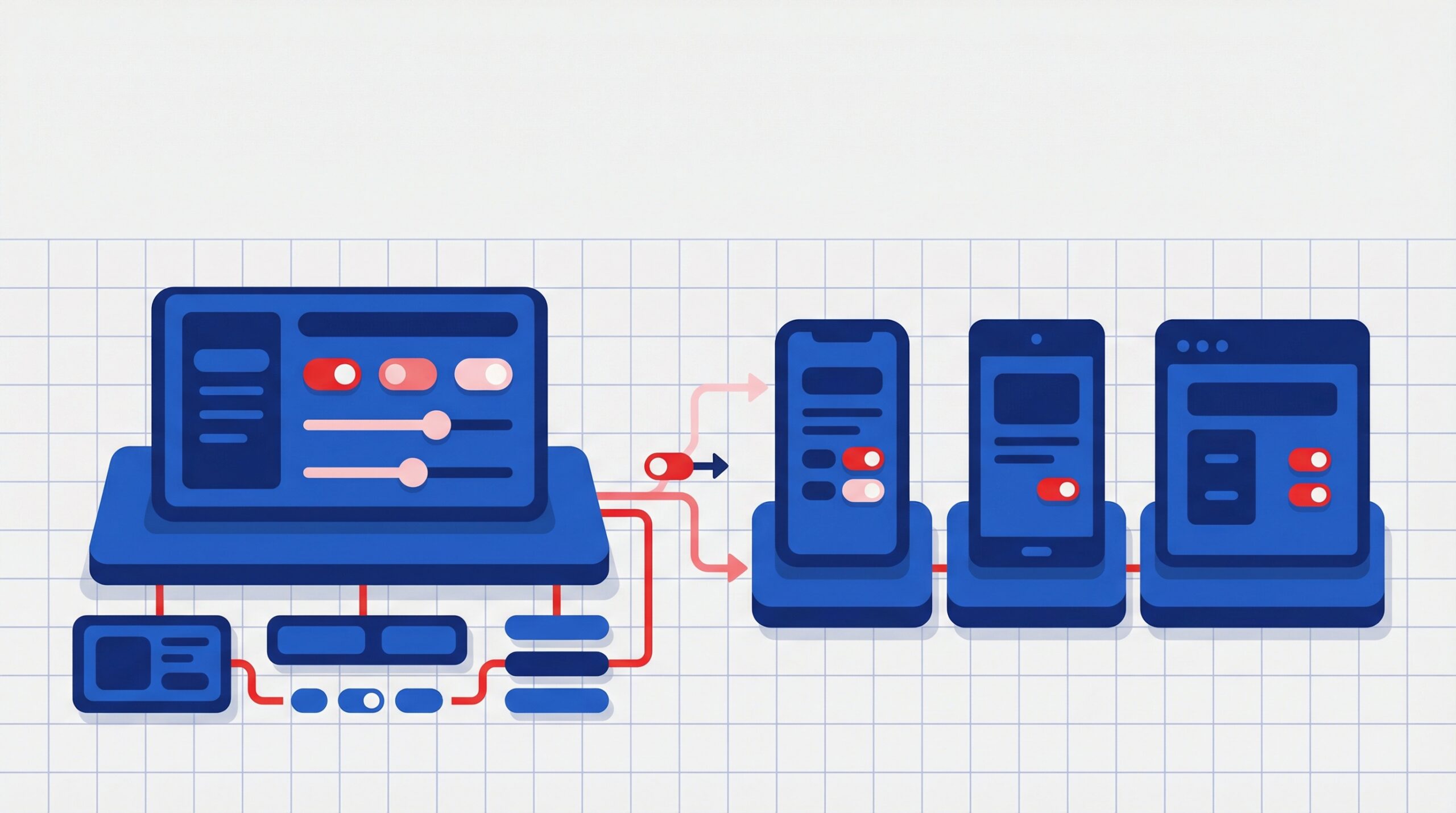

Enterprise System Integration Architectures

Enterprise machine learning systems deliver value only when they integrate with existing business applications. Models must interact with platforms such as CRM systems, ERP software, human resources tools, and operational databases that support daily business processes. Integration ensures that predictions and insights can be consumed directly within enterprise workflows.

Modern architectures use secure APIs and microservices frameworks to connect machine learning services with enterprise applications. These interfaces allow systems to send data to model endpoints and receive predictions that support tasks such as customer analysis, operational decision-making, and workflow automation.

Event-driven communication is also widely used in enterprise environments. Business events such as transactions, customer interactions, or operational alerts can trigger model predictions in real time. This enables faster decision-making and more responsive business processes.

Key integration components commonly used in enterprise environments include:

- API gateways that securely expose model services to enterprise applications

- Microservices frameworks that allow models to operate as independent and scalable services

- Event streaming platforms that process real-time business events and trigger predictions

- Standardized adapters that simplify integration with legacy systems and enterprise software

These integration approaches allow machine learning systems to operate as embedded components of enterprise technology ecosystems rather than standalone analytical tools.
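The standardized adapter idea can be sketched as a set of small classes that each translate one source system's record format into a single request schema for the model. The fixed-width CRM layout, the ERP field names, and the `PredictionRequest` schema below are all hypothetical and chosen only to show the pattern.

```python
from dataclasses import dataclass


@dataclass
class PredictionRequest:
    customer_id: str
    features: dict


class LegacyCrmAdapter:
    """Maps a fixed-width legacy export line to the request schema (layout is hypothetical)."""

    def to_request(self, line: str) -> PredictionRequest:
        customer_id = line[0:8].strip()
        tenure_months = int(line[8:12])
        monthly_spend = int(line[12:20]) / 100  # the legacy field stores cents
        return PredictionRequest(
            customer_id,
            {"tenure_months": tenure_months, "monthly_spend": monthly_spend},
        )


class ErpJsonAdapter:
    """Maps an ERP-style record with its own field names to the same schema."""

    def to_request(self, record: dict) -> PredictionRequest:
        return PredictionRequest(
            str(record["CustNo"]),
            {
                "tenure_months": record["TenureMonths"],
                "monthly_spend": record["MonthlySpend"],
            },
        )


crm_line = "C0001".ljust(8) + "0024" + "00012550"
crm_request = LegacyCrmAdapter().to_request(crm_line)
erp_request = ErpJsonAdapter().to_request(
    {"CustNo": 1, "TenureMonths": 24, "MonthlySpend": 125.5}
)
print(crm_request)
print(erp_request)
```

Both adapters produce identical feature dictionaries, so the model service needs no knowledge of where a record originated.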

Common Challenges and Solutions

Enterprise machine learning deployments often face challenges related to system integration, data reliability, and governance. Legacy enterprise applications can make it difficult to connect models with existing workflows, while inconsistent data pipelines may affect prediction accuracy.

Organizations can address these issues by adopting modular integration approaches and strengthening data management practices. Feature management tools and data validation processes help ensure models receive reliable inputs.

Collaboration between engineering, data science, and business teams also improves adoption. Many enterprises begin with focused pilot programs tied to clear business metrics, which helps validate impact before scaling solutions across the organization.

Strategic Roadmap for Enterprise Deployment

Successful enterprise deployment requires a clear and structured roadmap. Organizations should begin by identifying high-impact use cases where machine learning can deliver measurable business outcomes. Starting with focused initiatives allows teams to demonstrate value while building internal expertise.

As adoption grows, it becomes important to establish governance frameworks that manage data usage, feature definitions, and model lifecycle processes. Strong governance ensures transparency, reliability, and compliance across the organization.

Enterprises also benefit from cross-functional oversight. Collaboration between data scientists, engineers, security teams, and business leaders helps align technical development with operational priorities.

A well-defined roadmap typically includes:

- Selecting high-value use cases with clear success metrics

- Establishing governance practices for data and model management

- Creating cross-team review processes for oversight and accountability

- Defining performance, cost, and compliance metrics

Following a phased approach allows organizations to scale machine learning initiatives gradually while maintaining operational stability and reducing implementation risks.

Conclusion

Vertex AI enables enterprises to build, deploy, and govern machine learning applications at scale. With strong architecture, reliable data management, clear model lifecycle governance, and effective integration practices, organizations can accelerate time to value while maintaining security and compliance. These capabilities help businesses move from experimentation to production and achieve measurable operational improvements.

Organizations looking to implement or scale enterprise AI can also benefit from expert guidance. Acmeminds AI solutions support enterprises in designing scalable AI architectures, modernizing data workflows, and deploying production-ready machine learning systems that deliver real business outcomes.


FAQs

1. What is Vertex AI used for in enterprises?

Vertex AI supports training, deployment, orchestration, and governance of machine learning models at enterprise scale, enabling organizations to streamline AI initiatives across functions and accelerate time-to-value.

2. How can enterprises deploy models in production?

Vertex AI provides managed endpoints for real-time inference and batch prediction jobs for offline workloads, with autoscaling, monitoring, and logging. This supports reliable production deployment and allows enterprises to handle varying workloads efficiently while maintaining performance.

3. How does Vertex AI integrate with enterprise systems?

Models connect to CRM, ERP, HR, and analytics platforms using APIs, microservices, and event-driven pipelines. This integration allows seamless access to enterprise data and enables AI-powered insights without disrupting existing workflows.

4. How should enterprises govern machine learning models?

Governance includes access control, audit logging, bias and fairness evaluation, and lineage tracking. These practices ensure compliance, maintain model integrity, and provide transparency in AI-driven decision-making.

5. What are enterprise adoption trends for machine learning?

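The industry adoption data cited earlier in this guide indicates that 87 percent of organizations with production machine learning report measurable improvements in operational efficiency after standardizing on a unified platform. Organizations are also planning to expand automation across functions and are increasingly leveraging ML to improve efficiency, decision-making, and customer experience at scale.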

6. How can organizations optimize cost when running models?

Optimization strategies include autoscaling, batch inference, caching, and monitoring usage to forecast resource requirements. These approaches help enterprises reduce infrastructure costs while maintaining high performance and reliability.
