AI Software Testing Beyond Selenium Automation

Software testing has always been a critical part of delivering reliable digital products, but the expectations placed on QA teams have changed significantly. With faster release cycles, distributed systems, and continuous deployment models, traditional automation approaches are struggling to keep up.

Selenium, once the default choice for automation testing, is now being complemented, and in some cases replaced, by AI-driven testing frameworks that offer greater adaptability, reduced maintenance effort, and smarter test execution.

The shift is not just technological. It reflects a broader evolution in how organizations define software quality in modern engineering environments.

Limitations of Traditional Automation Testing

While tools like Selenium have played a foundational role in standardizing UI automation testing, their limitations become more visible as applications scale and modern architectures shift toward dynamic frontends, microservices, and rapid CI/CD-driven delivery cycles.

High maintenance effort in dynamic applications

Traditional automation scripts are tightly coupled to the UI structure through locators such as XPath expressions, CSS selectors, and absolute DOM paths. This creates a strong dependency between test cases and the frontend implementation.

In modern applications built with frameworks like React or Angular, UI components are frequently updated due to design iterations, feature toggles, or refactoring. Even minor changes in the DOM structure or element attributes can break multiple test cases simultaneously.

This leads to continuous test maintenance overhead, increased reliance on QA engineers for script updates, and growing automation debt over time, especially in large regression suites.
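To make the coupling concrete, the sketch below parses two hypothetical versions of a checkout page with Python's standard-library `xml.etree.ElementTree` and resolves the same button through a positional path and through a `data-testid` attribute. The markup, the locator strings, and the `find_text` helper are illustrative assumptions, not taken from a real application or from Selenium's API.

```python
import xml.etree.ElementTree as ET

# Hypothetical page before a redesign.
PAGE_V1 = """
<body>
  <form>
    <button data-testid="cancel">Cancel</button>
    <button data-testid="submit">Pay now</button>
  </form>
</body>
"""

# Hypothetical page after a redesign: the form is wrapped in a new <div>
# and a "Help" button is added in front of the others.
PAGE_V2 = """
<body>
  <div class="card">
    <form>
      <button data-testid="help">Help</button>
      <button data-testid="cancel">Cancel</button>
      <button data-testid="submit">Pay now</button>
    </form>
  </div>
</body>
"""

POSITIONAL = "./form/button[2]"                    # depends on exact path and order
BY_TEST_ID = ".//button[@data-testid='submit']"    # depends on one stable attribute


def find_text(page: str, locator: str):
    """Return the text of the first matching element, or None if nothing matches."""
    element = ET.fromstring(page).find(locator)
    return None if element is None else element.text


for name, page in (("v1", PAGE_V1), ("v2", PAGE_V2)):
    print(name, find_text(page, POSITIONAL), find_text(page, BY_TEST_ID))
```

Wrapping the form in one extra container is enough for the positional locator to stop matching, while the attribute-based locator still resolves to the same button.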

Slow adaptation to product changes

In Agile and DevOps environments, product changes are frequent and incremental. However, traditional automation testing struggles to keep pace because test cases are typically updated after development is completed. As a result, regression suites often lag behind actual application changes, creating delays in validation cycles.

Flaky test behavior in CI/CD pipelines

Flaky tests are a common challenge in automation pipelines, where tests pass or fail inconsistently without any code changes. These failures are usually caused by timing issues, asynchronous API responses, unstable test environments, or shared test data conflicts.

In CI/CD workflows, flaky tests reduce confidence in automation results and often force teams to rerun pipelines or manually verify failures. Over time, this creates “test noise,” where real defects become harder to identify among inconsistent failures.
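One simple way to separate flaky tests from real regressions follows directly from the definition above: a test that both passed and failed on the same commit changed its result without a code change. The sketch below assumes CI history is available as `(commit, test_name, passed)` tuples; that record shape and the function name are hypothetical.

```python
from collections import defaultdict


def find_flaky_tests(runs):
    """Return tests that both passed and failed on the same commit.

    `runs` is an iterable of (commit_sha, test_name, passed) tuples.
    """
    outcomes = defaultdict(set)
    for commit, test, passed in runs:
        outcomes[(commit, test)].add(passed)
    # Two distinct outcomes for one (commit, test) pair means inconsistent results.
    return sorted({test for (_, test), seen in outcomes.items() if len(seen) == 2})


# Hypothetical CI history.
history = [
    ("a1", "test_login", True),
    ("a1", "test_login", False),   # same commit, different result: flaky
    ("a1", "test_cart", True),
    ("b2", "test_cart", False),    # different commit: possibly a real regression
]
print(find_flaky_tests(history))
```

Here only `test_login` is reported, because the `test_cart` failure coincides with a new commit and still deserves investigation.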

This is why many teams are now shifting toward more adaptive and intelligent testing approaches that reduce script fragility and improve resilience in fast-changing software environments.

How AI Is Transforming Software Testing

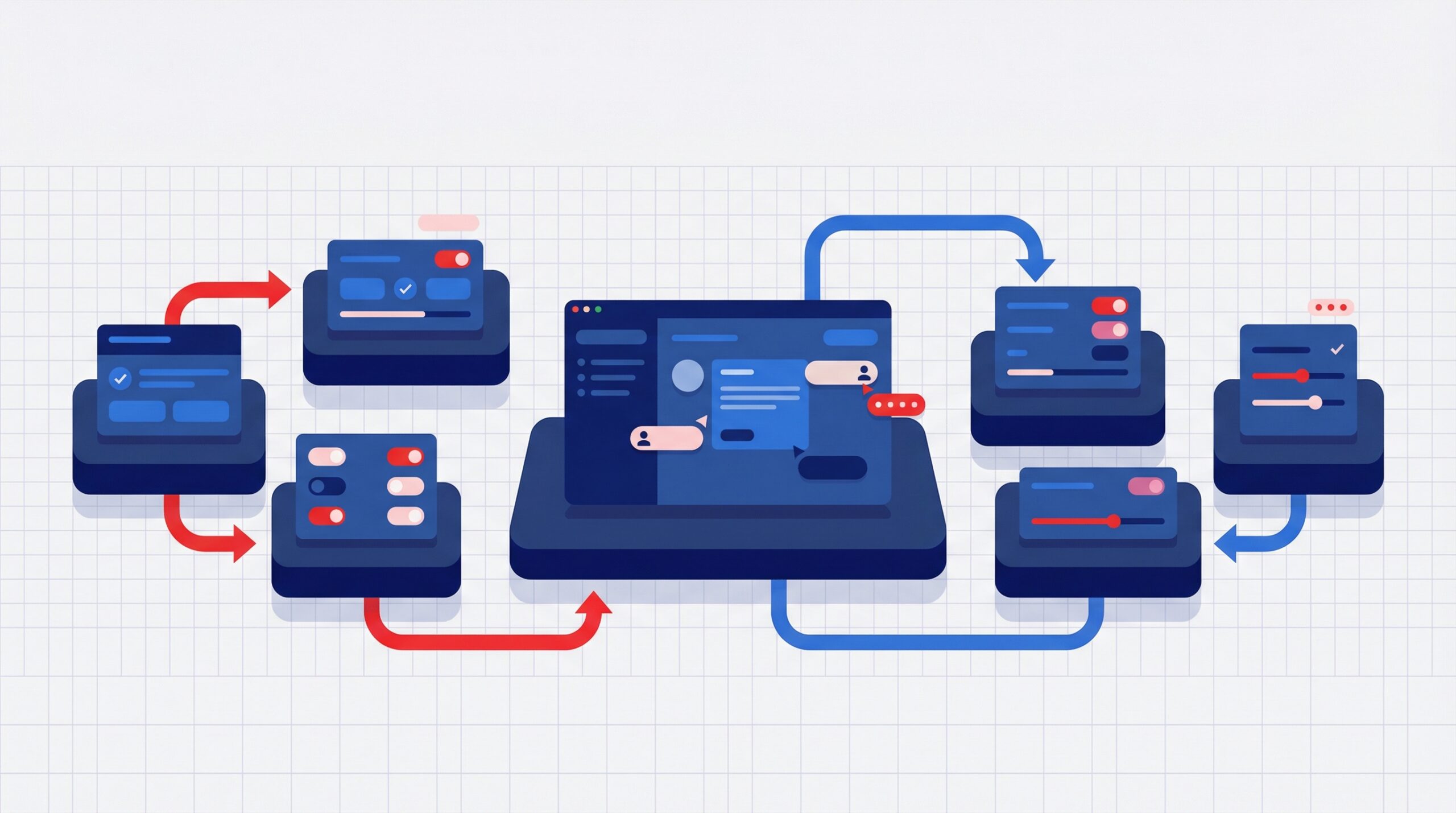

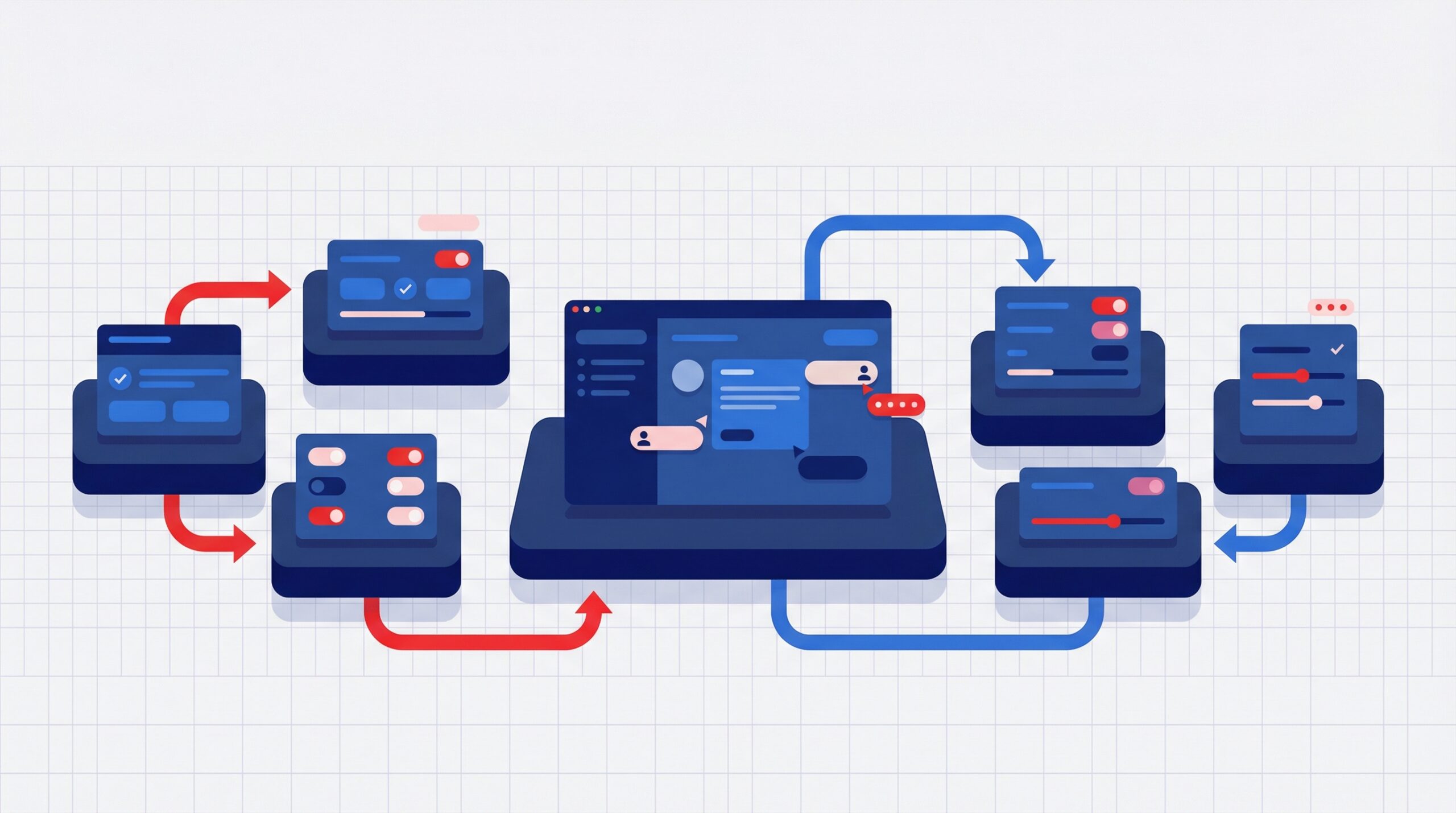

Artificial intelligence is shifting software testing from rigid, rule-based execution toward adaptive systems that make decisions based on application behavior, historical data, and real-time changes in the software environment.

Self-healing test automation

Traditional automation breaks easily when UI elements change. AI-powered testing tools introduce self-healing capabilities that automatically detect changes in element attributes, DOM structure, or selectors and adjust test execution paths without manual intervention.

This significantly reduces maintenance overhead in fast-moving applications.
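The general idea behind self-healing can be sketched as attribute matching: store a fingerprint of the element when the test last passed, and when the original locator fails, pick the candidate on the new page that still shares most of those attributes. This is a simplified illustration, not how any of the tools below is implemented; the attribute names, the 0.6 threshold, and both function names are assumptions.

```python
def similarity(fingerprint: dict, candidate: dict) -> float:
    """Fraction of the stored attributes that the candidate still matches."""
    if not fingerprint:
        return 0.0
    matches = sum(1 for key, value in fingerprint.items() if candidate.get(key) == value)
    return matches / len(fingerprint)


def heal_locator(fingerprint: dict, candidates: list, threshold: float = 0.6):
    """Return the most similar candidate, or None if nothing is similar enough."""
    best = max(candidates, key=lambda c: similarity(fingerprint, c), default=None)
    if best is None or similarity(fingerprint, best) < threshold:
        return None
    return best


# Fingerprint recorded on the last passing run (hypothetical values).
stored = {"tag": "button", "id": "submit-btn", "text": "Pay now", "class": "btn primary"}

# Elements found on the page after a redesign renamed the id.
page_elements = [
    {"tag": "button", "id": "pay-submit", "text": "Pay now", "class": "btn primary"},
    {"tag": "button", "id": "cancel", "text": "Cancel", "class": "btn"},
]

print(heal_locator(stored, page_elements))
```

The threshold matters: set too low, the test may silently click the wrong element, which is why healed locators are usually worth logging for human review.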

Common tools used in this space include:

- Testim – AI-driven test stability and self-healing locators

- mabl – intelligent end-to-end testing with automatic UI adaptation

- Functionize – machine learning-based test automation and healing

- Healenium (open-source) – Selenium extension for self-healing locators

Automated test case generation

AI can generate test cases by analyzing real user behavior, production logs, and historical defect patterns. This ensures test coverage is aligned with how users actually interact with the system rather than only predefined QA assumptions.

Instead of manually writing every scenario, teams can focus on validating high-value workflows while AI expands coverage intelligently.
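As a rough illustration of usage-driven coverage, the sketch below counts the most frequent action sequences in recorded sessions so they can be turned into test scenarios first. It uses plain frequency counting rather than a trained model, and the session format, action names, and `top_user_flows` function are hypothetical.

```python
from collections import Counter


def top_user_flows(sessions, length=3, limit=1):
    """Count every contiguous run of `length` actions and return the most common."""
    counts = Counter()
    for actions in sessions:
        for start in range(len(actions) - length + 1):
            counts[tuple(actions[start:start + length])] += 1
    return counts.most_common(limit)


# Hypothetical sessions extracted from analytics or production logs.
sessions = [
    ["login", "search", "add_to_cart", "checkout"],
    ["login", "search", "add_to_cart"],
    ["login", "profile", "logout"],
]

print(top_user_flows(sessions))
```

In this data the flow `login`, `search`, `add_to_cart` appears in two of three sessions, so it would be the first candidate for an automated scenario.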

Popular tools enabling this capability include:

- Autify – AI-based test creation from user flows

- Testim Smart Test Creation – generates tests from recorded actions

- Rainforest QA – crowd + AI-assisted test generation for UX flows

Predictive defect detection

AI models can analyze code commits, change frequency, dependency graphs, and past defect history to identify high-risk areas before deployment. This enables QA teams to prioritize testing where failures are most likely to occur.

This approach shifts QA from reactive bug detection to proactive risk mitigation.
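A hand-weighted heuristic shows the shape of this kind of scoring. The sketch below normalizes three of the signals mentioned above and ranks modules by a weighted sum; a real predictive tool would learn such weights from data, and the signal names, weights, and sample numbers here are all assumptions.

```python
def rank_modules_by_risk(modules, weights=None):
    """Rank modules by a weighted sum of signals, each scaled to the 0..1 range."""
    weights = weights or {"recent_commits": 0.4, "past_defects": 0.4, "dependents": 0.2}
    # Scale each signal by its maximum so that no single unit dominates the score.
    maxima = {key: max(m[key] for m in modules.values()) or 1 for key in weights}
    scores = {
        name: sum(weight * signals[key] / maxima[key] for key, weight in weights.items())
        for name, signals in modules.items()
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Hypothetical signals per module.
modules = {
    "checkout": {"recent_commits": 12, "past_defects": 5, "dependents": 3},
    "search": {"recent_commits": 4, "past_defects": 1, "dependents": 6},
    "profile": {"recent_commits": 1, "past_defects": 0, "dependents": 1},
}

for name, score in rank_modules_by_risk(modules):
    print(f"{name}: {score:.2f}")
```

With these numbers `checkout` ranks first, since it has both the most recent changes and the most past defects.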

Tools and platforms supporting predictive QA include:

- Launchable – ML-based test prioritization and failure prediction

- SeaLights – AI-driven test impact analysis and coverage optimization

- GitHub Advanced Security (code scanning) – security-focused risk signals surfaced in CI workflows

According to the Capgemini Research Institute, 60% of organizations report that AI improves testing efficiency and reduces time spent on repetitive QA tasks, highlighting the growing adoption of AI-driven quality engineering practices in modern software development environments.

AI-Powered Testing in Modern Tooling

Modern software testing ecosystems are increasingly embedding AI capabilities either natively within platforms or through integrations and extensions that enhance automation intelligence. This shift is helping teams move beyond static test execution toward adaptive, context-aware testing systems that align better with continuous delivery models.

AI-assisted execution in frameworks like Playwright

Frameworks such as Playwright are being enhanced with intelligent layers that improve reliability in dynamic applications, particularly where UI behavior changes frequently or timing issues cause inconsistencies.

Rather than relying only on static test scripts, AI-assisted setups help:

- Improve resilience of cross-browser test execution

- Reduce failures caused by minor UI shifts or rendering delays

- Strengthen synchronization handling in asynchronous user flows

This makes end-to-end testing more stable in fast-changing frontend environments without requiring constant script rewrites.

Risk-based test optimization using machine learning

One of the most practical AI applications in testing is smarter test selection. Instead of running entire regression suites for every code change, machine learning models evaluate risk signals and prioritize what actually needs validation.

These signals typically include code change volume, historical defect density, and module complexity.

This approach helps QA teams:

- Reduce redundant test execution in CI cycles

- Focus testing effort on high-risk areas of the application

- Improve overall pipeline efficiency without compromising coverage

Tools like Launchable and SeaLights are commonly used to enable this type of intelligence-driven test optimization.
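The selection step itself can be approximated with a greedy heuristic: keep only tests that cover changed modules, then fill a time budget with the tests most likely to fail per second of runtime. This is a minimal sketch and not how Launchable or SeaLights work internally; the test metadata fields and the `select_tests` function are hypothetical.

```python
def select_tests(tests, changed_modules, budget_seconds):
    """Pick tests that touch changed modules, best failure-rate-per-second first."""
    relevant = [t for t in tests if t["covers"] & changed_modules]
    relevant.sort(key=lambda t: t["fail_rate"] / t["duration"], reverse=True)
    selected, used = [], 0.0
    for test in relevant:
        # Skip any test that would push the run past the time budget.
        if used + test["duration"] <= budget_seconds:
            selected.append(test["name"])
            used += test["duration"]
    return selected


# Hypothetical test metadata: covered modules, historical failure rate, runtime in seconds.
tests = [
    {"name": "test_checkout_e2e", "covers": {"checkout", "cart"}, "fail_rate": 0.20, "duration": 60},
    {"name": "test_cart_totals", "covers": {"cart"}, "fail_rate": 0.10, "duration": 10},
    {"name": "test_search_filters", "covers": {"search"}, "fail_rate": 0.30, "duration": 20},
    {"name": "test_checkout_tax", "covers": {"checkout"}, "fail_rate": 0.05, "duration": 50},
]

print(select_tests(tests, changed_modules={"checkout", "cart"}, budget_seconds=80))
```

Tests skipped by a selection like this are typically still run on a slower schedule, such as a nightly full regression, so coverage is deferred rather than dropped.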

Smarter decision-making in CI/CD pipelines

AI is also improving how CI/CD pipelines handle testing workflows by introducing context-aware execution strategies. Instead of following fixed pipelines, testing decisions are increasingly driven by real-time insights from code changes and historical performance data.

This allows pipelines to:

- Trigger only relevant subsets of tests based on change impact

- Filter out noise from unstable or low-signal failures

- Improve accuracy in determining whether a build is truly production-ready

By integrating AI into existing testing tools and pipelines, engineering teams achieve more efficient validation cycles, reduced execution overhead, and improved confidence in release decisions. The emphasis is shifting from running more tests to running the right tests at the right time.
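Noise filtering at the release gate can be sketched as a simple partition of failures. The example below assumes a list of known-flaky tests is already maintained elsewhere and treats only the remaining failures as blocking; the function name and the result format are hypothetical.

```python
def gate_build(failures, flaky_tests):
    """Split failures into blocking and quarantined; block only on the former."""
    failed, flaky = set(failures), set(flaky_tests)
    blocking = sorted(failed - flaky)
    quarantined = sorted(failed & flaky)
    return {"releasable": not blocking, "blocking": blocking, "quarantined": quarantined}


# Hypothetical pipeline result.
print(gate_build(
    failures=["test_login", "test_checkout_tax"],
    flaky_tests=["test_login"],
))
```

Quarantined failures are reported rather than hidden, so a test that is flaky and also genuinely broken still gets a human look.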

Real-World Use Cases of AI in QA

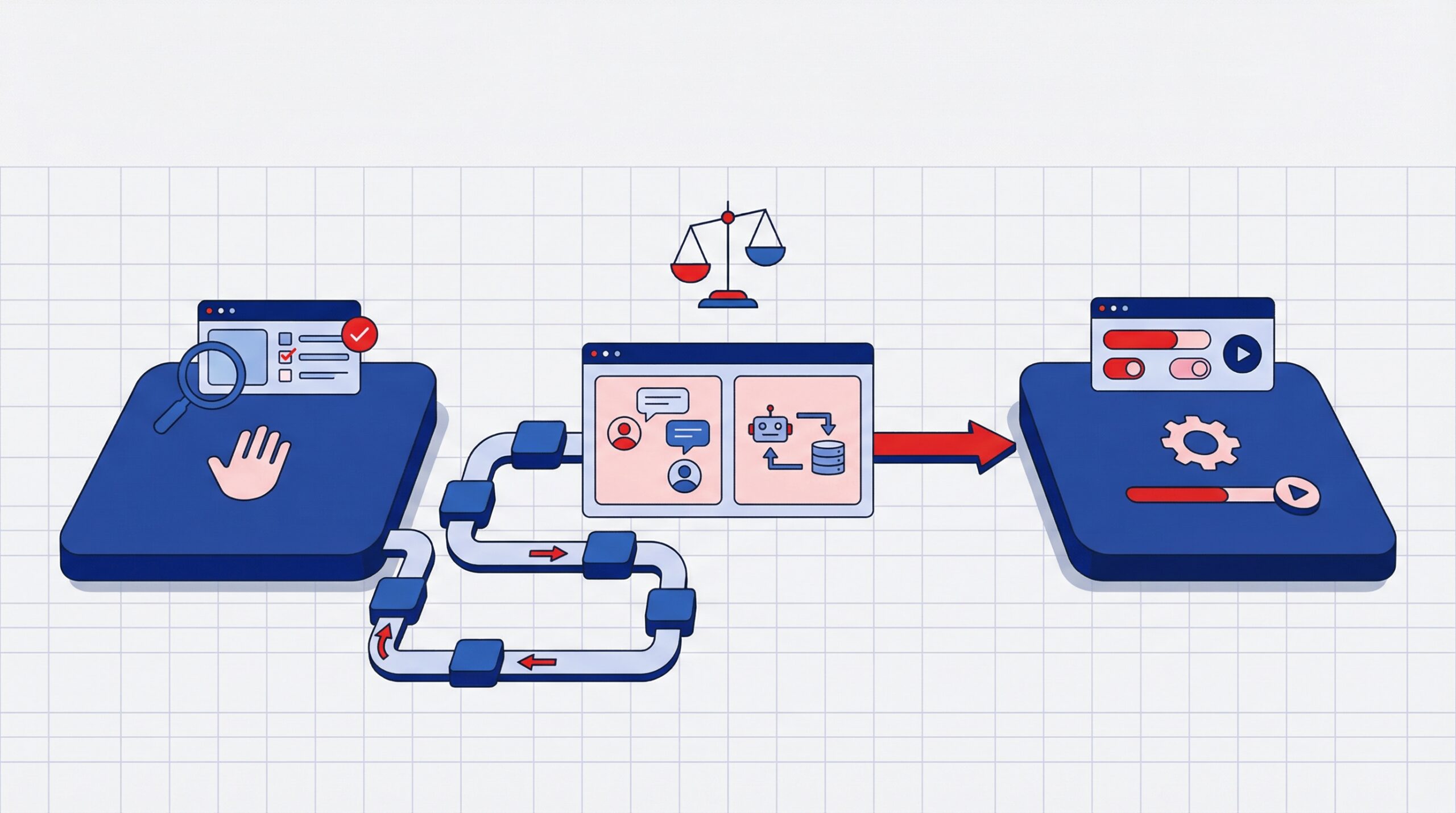

AI in QA is increasingly being used not as a replacement for existing testing frameworks, but as a decision layer that improves how testing is planned, executed, and interpreted in real engineering environments. The impact is most visible in teams operating at scale where release cycles are frequent and system complexity is high.

In many SaaS engineering setups, AI is being used to reduce wasted test execution by analyzing historical pipeline data and identifying patterns in failure distribution. Instead of treating every build the same way, modern systems learn which modules are most likely to break based on recent commits and production incidents. Platforms like Launchable are applied here to intelligently select only the most relevant tests, significantly reducing CI pipeline time in large repositories.

AI is also being used in visual and UI regression workflows where traditional DOM-based testing becomes unreliable. Tools such as Applitools Eyes apply visual AI models to compare UI states at a perceptual level rather than relying only on selectors. This is particularly useful in frontend-heavy applications where layout shifts, responsive design changes, or dynamic rendering make traditional assertions fragile.
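Visual AI models are far more sophisticated than anything shown here, but the contrast with exact assertions can be illustrated with a tolerance-based comparison. The sketch below treats screenshots as grids of grayscale values and reports the share of pixels that changed by more than a tolerance; the data, the tolerance of 10, and the `changed_ratio` function are assumptions and are not related to the Applitools API.

```python
def changed_ratio(baseline, current, tolerance=10):
    """Share of pixels whose grayscale value moved by more than `tolerance`."""
    same_shape = len(baseline) == len(current) and all(
        len(a) == len(b) for a, b in zip(baseline, current)
    )
    total = sum(len(row) for row in baseline)
    if not same_shape or total == 0:
        raise ValueError("screenshots must be non-empty and have the same dimensions")
    changed = sum(
        abs(a - b) > tolerance
        for row_a, row_b in zip(baseline, current)
        for a, b in zip(row_a, row_b)
    )
    return changed / total


# Hypothetical 2x4 grayscale screenshots.
baseline = [[255, 255, 0, 0], [255, 255, 0, 0]]
rendering_noise = [[252, 255, 3, 0], [255, 253, 0, 2]]   # small anti-aliasing drift
layout_shift = [[255, 0, 0, 0], [255, 255, 0, 0]]        # one pixel flipped

print(changed_ratio(baseline, rendering_noise))
print(changed_ratio(baseline, layout_shift))
```

An exact pixel comparison would fail on both variants, whereas the tolerance lets rendering noise pass and still surfaces the real change.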

In API-heavy architectures, AI is increasingly used to detect behavioral drift across services rather than just functional failures. For example, Postman API tests paired with AI-driven monitoring integrations, or the observability intelligence in IBM Instana, help teams identify anomalies such as unusual latency patterns, inconsistent payload structures, or degraded service interactions that standard assertion-based tests would not flag.
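A basic statistical version of latency drift detection compares recent response times against a baseline and flags values that sit several standard deviations away. The z-score rule below is a stand-in for the learned models such platforms use; the threshold of 3 and the sample latencies are assumptions.

```python
from statistics import mean, stdev


def latency_anomalies(baseline_ms, recent_ms, z_threshold=3.0):
    """Return recent latencies more than `z_threshold` deviations from the baseline mean."""
    mu, sigma = mean(baseline_ms), stdev(baseline_ms)
    if sigma == 0:
        # A perfectly flat baseline: any different value counts as a deviation.
        return [value for value in recent_ms if value != mu]
    return [value for value in recent_ms if abs(value - mu) / sigma > z_threshold]


# Hypothetical response times in milliseconds (baseline mean 100, sample stdev 2).
baseline = [100, 102, 98, 101, 99, 100, 103, 97]
recent = [101, 104, 180]

print(latency_anomalies(baseline, recent))
```

A response of 180 ms would still pass a typical assertion such as "status is 200", which is the gap this kind of monitoring is meant to cover.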

Instead of running static test suites, teams are beginning to rely on systems that prioritize risk, interpret system behavior, and highlight where human attention is actually needed.

Challenges of AI in Software Testing

Despite its advantages, AI-based testing introduces practical challenges that teams need to account for before relying on it in production environments. These challenges are less about capability and more about data quality, reliability, and operational maturity.

Data dependency limitations

AI testing systems depend heavily on high-quality historical data such as past test runs, production logs, defect trends, and user behavior patterns. Without this foundation, the accuracy of predictions and recommendations drops significantly.

In early-stage products or newly launched systems, this data is often sparse or inconsistent. As a result, AI models may struggle to correctly prioritize tests or identify high-risk areas, limiting their effectiveness until enough operational history is built.

False positives and interpretation issues

AI-driven testing tools can sometimes misclassify system behavior, flagging non-issues as failures or missing subtle real defects. This happens when models are not sufficiently trained for a specific application context or when system behavior changes faster than model adaptation.

In CI/CD pipelines, this leads to unnecessary debugging effort, where engineers spend time investigating failures that are not actual bugs. It can also reduce trust in automation results if false alerts occur frequently, making teams more cautious about relying on AI outputs.

Tool maturity and reliability concerns

Although the AI testing ecosystem is growing rapidly, not all tools are mature enough for mission-critical production use. Some platforms still require extensive configuration, validation, and manual oversight to ensure reliable execution.

Even widely adopted tools often work best in a hybrid setup where AI supports decision-making rather than fully controlling test execution. This means human QA oversight is still essential, especially for high-risk releases or complex distributed systems.

Best Practices for Adopting AI Testing

Organizations adopting AI in QA should follow a structured approach rather than replacing existing systems abruptly. AI works best when introduced gradually alongside existing testing practices, allowing teams to build confidence in its outputs while maintaining release stability.

- Start with a hybrid testing approach combining manual testing, traditional automation, and AI-based validation to avoid disrupting existing QA workflows.

- Focus AI testing first on high-impact user journeys such as login, payments, and core business flows, where failure risk is highest.

- Integrate AI gradually into existing CI/CD pipelines instead of rebuilding processes, allowing teams to validate stability and outcomes step by step.

- Continuously monitor AI outputs and refine models based on real execution data to improve accuracy and reduce false predictions over time.
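Monitoring AI outputs, the last practice above, can start with two standard metrics computed after triage: precision (how many flagged failures were real defects) and recall (how many real defects were flagged). The identifiers and the `precision_recall` helper below are hypothetical.

```python
def precision_recall(flagged, confirmed):
    """Compare what the tool flagged with what triage confirmed as real defects."""
    flagged, confirmed = set(flagged), set(confirmed)
    true_positives = len(flagged & confirmed)
    precision = true_positives / len(flagged) if flagged else 0.0
    recall = true_positives / len(confirmed) if confirmed else 0.0
    return precision, recall


# Hypothetical sprint: four failures flagged by the tool, three defects confirmed by QA.
flagged_by_tool = ["B101", "B102", "B103", "B104"]
confirmed_defects = ["B102", "B104", "B107"]

precision, recall = precision_recall(flagged_by_tool, confirmed_defects)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

Tracking both numbers over time shows whether the tool is becoming noisier (falling precision) or missing more defects (falling recall).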

Future of Quality Assurance Engineering

Quality Assurance is evolving rapidly as software delivery becomes faster, more complex, and increasingly AI-driven. It is shifting from manual execution-focused testing to intelligent quality engineering that is embedded directly into development workflows.

- The QA role is broadening toward designing intelligent validation systems rather than maintaining static test scripts.

- Testing teams will increasingly build and manage automated quality frameworks that are adaptive, data-driven, and tightly integrated with development workflows.

- Future QA pipelines will include more autonomous testing capabilities where systems can execute, analyze, and optimize test runs with minimal human intervention.

- This evolution is positioning QA as a strategic function in software development that directly influences product reliability, speed of delivery, and engineering decisions.

Conclusion

AI is not replacing software testing. It is changing how testing is planned, executed, and scaled across modern engineering teams. It moves QA from repetitive execution to intelligent validation and better decision-making.

Organizations that adopt AI-driven testing early can achieve faster release cycles, improved application stability, and lower effort in maintaining large automation suites.

The shift from traditional Selenium-based automation to AI-assisted testing is a natural step toward more scalable and adaptive quality engineering.

At AcmeMinds, we help teams make this transition through our automation testing services. We design intelligent, scalable test automation frameworks that combine traditional reliability with modern AI-driven practices, helping businesses improve speed, accuracy, and confidence in every release.

FAQs

1. Is AI replacing traditional software testing tools like Selenium?

No. AI is enhancing traditional testing tools like Selenium by improving adaptability, reducing script maintenance, and accelerating test execution. Most organizations use AI alongside existing automation frameworks rather than fully replacing them.

2. What is self-healing in automation testing?

Self-healing in automation testing refers to a tool's ability to detect UI or application changes automatically and adjust locators or test steps without manual intervention. This helps reduce test failures caused by small frontend modifications.

3. How does AI improve test coverage?

AI improves test coverage by analyzing production data, user behavior, and application workflows to generate smarter test scenarios that reflect real-world usage patterns. This helps teams identify edge cases and reduce testing gaps.

4. Is AI testing suitable for small teams or startups?

Yes. AI-driven testing can benefit startups and small teams by reducing repetitive testing effort and improving release speed. However, it is usually most effective when combined with traditional automation strategies in a hybrid testing model.

5. What are the risks of using AI in testing?

The main risks of AI-powered testing include inaccurate predictions, poor-quality training data, false positives, and limited transparency in AI decision-making models. Organizations still need human validation for critical testing scenarios.

6. Which industries benefit most from AI-driven testing?

Industries such as SaaS, fintech, e-commerce, and enterprise software benefit significantly from AI-driven testing because they release frequent updates, manage complex workflows, and require faster quality assurance cycles.
